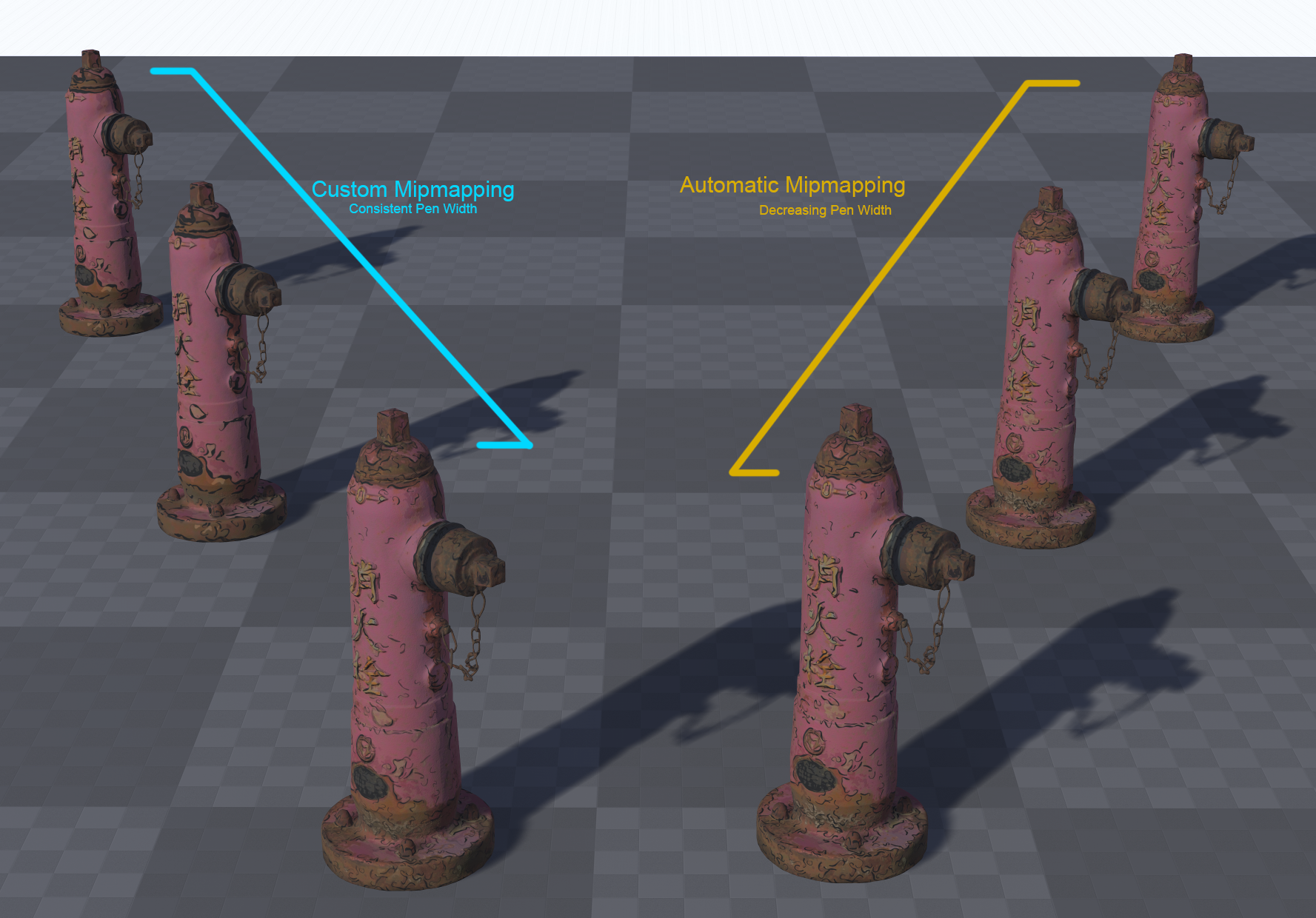

I wanted to research an easy way of creating every MIP level of a texture in Unreal (and any other engine), in such a way that I could create a unique image, or use custom filters or effects, at every MIP level. This would let me create several effects, such as:

- Use custom filters where alpha is involved, such as chain-link fence or chicken wire, so that the wires look realistic as distance scales.
- Apply custom effects as things scale at distance, cheaply. For example, we could have a hand-drawn sketch effect where pen width is more consistent at distance.

In Action

Left: Custom, Right: Default

The advantage of doing this at the MIP level is that we can use a single texture sample, and the effect will automatically blend seamlessly without having to linearly interpolate between a whole bunch of textures.

I tried a whole swathe of tools and techniques, most of which have problems. I'll cover these quickly, just so you don't have to go down the same rabbit holes that I had to:

- Intel Texture Works plugin for Photoshop. This supports MIPs as layers, which is useful in some ways. However, it does not export to an uncompressed DDS (8bpc, R8G8B8A8), which means we cannot import it into UE4: UE4 accepts DDS, but only uncompressed, as it applies compression itself.
- NVidia Photoshop Plugins. This supports uncompressed DDS export, but it stores MIPs as an extension to the image's X dimension, in one image. This makes modifying individual MIPs very tricky if you want to automate the process using Photoshop's Batch feature.
- NVidia Texture Tools. This doesn't support MIP editing at all.

This leaves NVidia's DDS Utilities, a legacy set of command-line tools.
Turns out, these do exactly what we need, if we combine them with a bit of sneaky Python and DOS commands.

NVidia's DDS Utilities

These utilities are just a bunch of executable files (.exe) that take some arguments and can combine images into MIPs for a single DDS. With that all out the way, let's get started setting it up.

Step 1: Prerequisites

Getting Python Packages

Step 2: Folder Setup

Next we're going to set up our folder structure. We'll use these folders as a cheap way of managing the pipeline. One day, I'll manage it behind a UI, but that's a lot of work for a niche use-case. We'll use four folders:

- "source", for source textures to generate MIP images from.
- "mips", a directory to keep these new MIP images in.
- "dds", where we'll keep the files that let us convert our MIPs to DDS.
- "OUTPUT", for our DDS textures, ready for our projects.

Make this structure anywhere on your PC. From your NVidia DDS Utilities installation path, copy these files into your /dds folder:

Step 3: Creating MIP Images

Now we can start. The first step is to create a set of images for each MIP level, so we can manually edit each MIP image later. To do this, we'll use Python and the Python Imaging Library, and a batch file. Head into the /source/ directory and create a new Python file, generateMips.py, and a batch file, genMips.bat. First, let's do the Python.
Open the file in your favourite text editor, or IDE, and type the following:

    import os
    import sys

    from PIL import Image

    # The texture is passed as the first argument (e.g. dropped onto genMips.bat)
    droppedFile = sys.argv[1]
    img = Image.open(droppedFile)
    srcSize = img.size
    path = "../mips/"
    # Strip the extension; splitext copes with extra dots in the file name
    name = os.path.splitext(droppedFile)[0]

    # MIP 0 is the source image at full resolution
    img.save(path + name + "_00.tif", format="TIFF")
    mipNum = 1
    print("Loaded " + droppedFile + ", mipping...")

    # Halve the resolution for each MIP until either dimension reaches 1 pixel
    while srcSize[0] > 1 and srcSize[1] > 1:
        newSize = (srcSize[0] // 2, srcSize[1] // 2)
        resized = img.resize(newSize, resample=Image.BILINEAR)
        # Zero-pad the MIP index to two digits: _01, _02, ... _10
        newName = path + name + "_{:02d}".format(mipNum)
        resized.save(newName + ".tif", format="TIFF")
        srcSize = newSize
        mipNum = mipNum + 1

As you can see, nothing too scary. I simply create a new image file at half the resolution of the previous file saved, until we hit 1×1 pixel (for a non-square texture, the loop stops as soon as either side reaches 1 pixel). You can choose a different resample filter if you want, but we'll be manually changing the files, so for now, it doesn't matter. We take the image as an argument so that we can automate it externally if we want to. In our case, I want to make a batch file that I can drop a file on to, and it will parse it with the Python script. In the batch file add this:

    generateMips.py %~nx1
    pause

This will take whatever we drop on top of the batch file and make new images for each MIP level in the /mips/ directory. Give it a go.

Drag and drop

Wait a short second…

Boom! Images as MIPs!

Step 4: Edit MIPs

This part is on you. You can edit your MIPs as you like. One suggestion, if you want to use a filter, is to create an Action in Photoshop, and use File->Automate->Batch to apply that action and save out. The important thing here is that your file name has just one underscore, before the MIP index (the script above produces names of the form name_00.tif). In this case, I've called it normal, but it doesn't matter. My output goes into the /dds/ folder; this is where you want your modified MIPs.

Step 5: Generate DDS

In that folder, create two batch files. We need two, as one is for Linear textures (for masks, etc.) and one is for sRGB/gamma-corrected albedo and colour textures. Create the two batch files from your text editor/IDE, called GenerateDDSColour.bat and GenerateDDSLinear.bat.

Here is the content for GenerateDDSColour.bat:

    nvdxt -quality_normal -nomipmap -pause -all -u8888 -gamma .\dds_dir
    set str=%~n1
    for /f "tokens=1,2 delims=_" %%a in ("%str%") do set stitchName=%%a&set sfx=%%b
    echo.stitchName: %stitchName%
    stitch %stitchName%
    md "..\OUTPUT" >nul 2>&1
    copy %stitchName%".dds" "..\OUTPUT"
    del %stitchName%*
    pause

and this into GenerateDDSLinear.bat:

    nvdxt -quality_normal -nomipmap -pause -all -u8888
    set str=%~n1
    for /f "tokens=1,2 delims=_" %%a in ("%str%") do set stitchName=%%a&set sfx=%%b
    echo.stitchName: %stitchName%
    stitch %stitchName%
    md "..\OUTPUT" >nul 2>&1
    copy
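To make the naming rules above concrete, here is a minimal sketch in plain Python (no imaging library needed) of the MIP chain and file names that generateMips.py produces, and of how the batch files' `for /f "tokens=1,2 delims=_"` line recovers the texture name. The function names `mip_chain`, `mip_filename` and `stitch_name` are my own, purely for illustration; they are not part of the NVidia tools.

```python
import os


def mip_chain(width, height):
    """Yield (level, (w, h)) pairs, halving the size each level.

    Mirrors the loop in generateMips.py: it stops as soon as either
    dimension reaches 1, so non-square textures end before 1x1.
    """
    level, size = 0, (width, height)
    yield level, size
    while size[0] > 1 and size[1] > 1:
        size = (size[0] // 2, size[1] // 2)
        level += 1
        yield level, size


def mip_filename(source_name, level, ext=".tif"):
    """Build the <name>_<two-digit index> file name used for each MIP image."""
    stem = os.path.splitext(os.path.basename(source_name))[0]
    return "{}_{:02d}{}".format(stem, level, ext)


def stitch_name(mip_file):
    """Take everything before the first underscore, like the first
    token of the batch file's delims=_ split."""
    stem = os.path.splitext(os.path.basename(mip_file))[0]
    return stem.split("_")[0]


if __name__ == "__main__":
    for level, size in mip_chain(256, 256):
        print(mip_filename("normal.png", level), size)
```

Note that `stitch_name("brick_wall_00.tif")` gives "brick" rather than "brick_wall", which is why the file name should contain only the one underscore before the MIP index.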

Character Clothing – Part 4: UE4 Logic

Table of Contents

- Character Clothing Zone Culling
- Character Clothing – Part 2: Maya
- Character Clothing – Part 3: UE4 Shaders
- Character Clothing – Part 4: UE4 Logic

Blueprints

First, create a new Actor Component Blueprint. Call it "BP_MeshZoneCulling" and open it up… Next to it, create an Enumeration and call that "ENUM_Cullzones". Open up the Enumeration and add in 24 rows (for UE4; in Unity you'll have to do this in C#, and can have up to 32), and check the Bitmask Flags option. Name each of the Display Name fields appropriately, like so:

The important thing here is the order: it must match the order of your zones in your UV set. Save it and open our Blueprint.

In the Blueprint, create a new integer variable and, in the Details Panel, set it to be a Bitmask and set the Enum to be your new Enumeration. Make it public by checking the "eye" icon. Duplicate this for every layer of clothing you have, and the Body:

Next, create a new Actor Blueprint for your character. Add in a new Skeletal Mesh Component for each layer of clothing, and one for your base character. Also add in our new Actor Component.

Go back into the Actor Component and add in a new variable for each of the body and clothing skeletal meshes, like so:

Whilst you're here, add in 4 Dynamic Material Instance arrays to the variable list. To be able to edit our Materials from the BP we need to use Dynamic Material Instances:

Back into the Character Actor now. Head into the event graph and add in the following logic, which assigns your skeletal mesh components into the corresponding variables in the Actor Component:

Return into the Actor Component and hook up the following logic on a custom event, "Setup Materials":

Quickly, back into the Player Character Blueprint and hook up your event to run after BeginPlay has finished, like so:

Finally now, back into the Actor Component.
Under Event Tick, hook up your Dynamic Material Instances to update every frame:

Obviously, you'll want to run the event off something other than Tick, but this is just to get you going. This takes the bitmask values you've defined and pushes them through to the Materials. You can now put your Actor BP into the level, hit Play and start testing.

This should be enough to get you going. You can create databases in Unreal Engine 4 to store all the culling zone data, and you can expand the logic of the system so that it sensibly knows which areas of the skin to hide and which to leave visible.
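As one way of expanding that logic, here is a minimal sketch in Python of how bitmasks could be propagated through the layers. It rests on an assumption that is mine, not something the Blueprints above do: each clothing layer declares the zones it covers, every layer is culled wherever a layer above it covers, and the body is culled wherever any layer covers. The function name `propagate_cull_masks` is hypothetical.

```python
def propagate_cull_masks(covers):
    """Work out what each layer should hide.

    covers: list of zone bitmasks, ordered innermost layer first.
    Returns (body_cull, layer_cull), where layer_cull[i] is the mask
    to push into the material of layer i.
    """
    hidden_by_above = 0
    layer_cull = [0] * len(covers)
    # Walk from the outermost layer inwards, accumulating covered zones
    for i in range(len(covers) - 1, -1, -1):
        layer_cull[i] = hidden_by_above
        hidden_by_above |= covers[i]
    # The body sits under every layer, so it gets the OR of all of them
    return hidden_by_above, layer_cull


if __name__ == "__main__":
    tank_top = 0b0110
    jacket = 0b0011
    body, layers = propagate_cull_masks([tank_top, jacket])
    print(bin(body), [bin(m) for m in layers])
```

The masks are combined with a bitwise OR rather than `+`: adding only gives the same answer when no zone is shared, and here the tank top and jacket both cover zone 1, so `0b0110 + 0b0011` would produce the wrong mask.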

Character Clothing – Part 3: UE4 Shaders

Unreal Engine 4

Now we move into the next stage. We now need to do the following:

- Create a shader that can dynamically cull mesh, based on a bitmask value
- Create a Blueprint that can send and update the bitmask value, based on user input
- Create a Blueprint that will propagate bitmask values so that layers of clothes all appropriately cull other meshes

The Shader

We'll start by setting up a Material in Unreal that exposes the properties we need for each piece of clothing, then we'll create Material Instances off this for each piece of clothing/mesh. This is standard practice in UE4. For Unity you would need to make a Shader using this approach, then create Materials using that base Shader for each mesh. It's the same process, just different terminology between the engines.

Start off by adding Texture Parameters for each of your texture maps. You can do these however you wish, i.e. if you have certain features such as re-colouring, etc., then add these in here.

Basic Material implementation for the green t-shirt

Next, create a Material Instance for every Material you need, then apply it to your meshes. Here I have one Material for the skin and one base material for the clothing; all the clothing materials are instanced from this base material.

Next, create a new Material Function, we'll call this "MF_Cullzone".
In this, create the following graph:

In the Custom node, add in the 3 Inputs you can see (bitmask, UV and worldPos, as used in the code), then paste this code into the Custom node:

    if(bitmask > 0)
    {
        // 32 zones: one for each bit of a 32-bit integer
        int maxPower = 32;
        // Width of one zone in UV space (1/32)
        float uvCoordRange = 1.0 / maxPower;
        int a = int(bitmask);
        for(int i = 0; i < maxPower; i++)
        {
            // Is bit i set in the bitmask?
            if(a & (1 << i))
            {
                // Does this vertex's UV sit inside zone i?
                if(UV.x > i * uvCoordRange && UV.x < (1 + i) * uvCoordRange)
                {
                    // Dividing by 0 breaks the position, culling the vertex
                    return worldPos / 0.0;
                }
            }
        }
    }
    return worldPos;

This code takes our bitmask value and checks to see if our UV sits within one of the zones that value enables. For example, if our bitmask value is 3, this equates to: 0000 0000 0000 0000 0000 0000 0000 0011. We therefore check if our UV is in the first or second zone in our UV mask, which is the UV.x range 0.0 – 0.0625. If it is, we divide its position by 0. The "World Position" input node allows you to pass through an existing World Position Offset, in case you need it for a different effect.

Save this Material Function and then hook it up into the World Position Offset node of all your base materials:

Now head into your Material Instance and crank up/down that bitmask value:

Here's a quick example of the values working:

Now on to the final part: Character Clothing – Part 4: UE4 Logic
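If you want to check which bitmask values hide which UVs without opening the editor, the Custom node's test can be mirrored on the CPU. This is a minimal Python sketch of the same comparison, not engine code; `is_culled` is a name I've made up for it.

```python
MAX_ZONES = 32
ZONE_WIDTH = 1.0 / MAX_ZONES  # 0.03125, one grid column in the UV editor


def is_culled(bitmask, u):
    """Return True if a vertex with UV.x == u would be culled.

    Same logic as the Custom node: for every bit set in the bitmask,
    test whether u lies strictly inside that zone's column.
    """
    if bitmask <= 0:
        return False
    for i in range(MAX_ZONES):
        if bitmask & (1 << i) and i * ZONE_WIDTH < u < (i + 1) * ZONE_WIDTH:
            return True
    return False


if __name__ == "__main__":
    for u in (0.0, 0.01, 0.03125, 0.04, 0.07):
        print(u, is_culled(3, u))
```

Because both comparisons are strict, a UV sitting exactly on a grid line (0.03125, say) or at 0.0 is never culled, which is a good reason to keep each zone's UVs comfortably inside its column.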

Character Clothing – Part 2: Maya

Setting Up

We now have an approach we can take. We will now turn this into a practical solution.

We first need to find a way to assign a zone of our mesh to a bitmask value. For example, if you had a hand you were trying to split into different zones, you may choose one zone for each digit (fingers and thumb), one for the top of the hand and one for the palm. How can we tell our shader about these zones?

In vertex shaders we have access to a few things. Two common ways of attaching data to a single vertex are UVs and vertex colors. UVs use coordinates, a pair of values. We only need one for now, so we can grab the x-component of the UVs and use that. We could use vertex colors, but the tooling here would be a problem: it is easy to move a UV coordinate up or down, but it is less easy to increase painted values by increments, at least in Maya. So in this case, we'll stick with UVs.

The first thing we want to do is define the base zones to cull. We need to do this in our modelling package. I'll walk you through how I set things up in Maya:

The UV Basics

First steps, then. Let's change Maya's grid settings in the UV editor to be useful to us.

0.0312, you ask? This is 1/32, as we want 32 grid points in our 0-1 UV space (it's actually 0.03125, but Maya rounds it).

Each of the horizontal zones now equates to a zone we can selectively cull

Now we need to create a new UV set. A UV set is called a UV Channel in Unreal Engine 4. It is simply a set of UVs for a vertex. Each vertex can have as many different UVs as you like, and these are stored in channels. It is worth noting that the more UV sets you have, the more vertex data your graphics card has to process at runtime. For us this is fine, but keep it in mind.

Create a new UV set.
It can be empty as we're going to assign new UVs to all parts of our mesh. Start by selecting your mesh, and clicking the following:

You should now be able to head into the UV Set Editor and see your new UV set:

Next we'll use the following image as a texture. It is just 32 colours, so that as we're assigning each zone in our UV space we get some clean visual feedback on our mesh:

Create and assign a new shader (Lambert or Blinn will do). In its Color value, add a new file node and use the above texture. Turn on textures in your viewport:

Next we need to be able to see the second UV set in use on our mesh (by default Maya only displays the first UV set in the viewport):

All our UVs are 0,0 in this second set, so we're all red.

With your second UV set selected, you can now begin UV'ing sections of your mesh. For this process, simply select each zone of your mesh you want to cull. Then create some Automatic UVs for it. Then scale the UVs down as small as you can manage and make sure they fit inside one of the vertical grid columns:

Then repeat for each zone.

NOTE: As mentioned previously, if you're using UE4, you are limited to the first 24 zones!

But which zones? How do I cut this up?!

Good question. The quick answer is that it's up to you.
But there are some things to consider:

- Your clothing will need to be designed around these zones: the closer you follow the zones with your clothing, the fewer clipping issues you'll have
- Start by looking at your clothing designs and working out the zones you want to create
- Define the zones on your base character first

Here is how I have approached mine, based on the clothing I'm using (in an ideal world, you split the zones up first, then design your clothing):

Each color is a unique zone we can dynamically cull

Depending on your clothing you may want to dedicate areas differently. In this example, I'm running just one zone for the neck and face

The next step is crucial: we need to apply the same zoning to our clothes. This makes things consistent and means we can layer a jacket on top of a tank top (culling the parts of the tank top we can no longer see), and then combine the two bitmasks (a bitwise OR, which is the same as adding them only when the two layers share no zones) and apply that value to the character's skin mesh. It will hide everything both layers of clothing are on top of!

Here is the zoning on some example clothing:

The zoning matches. FWIW: I'd recommend a closer topology match between your clothing and characters, if you can manage it, than I demonstrate here (time constraints, may redo these assets)

The final step is to export this out to .FBX, ready for Unreal Engine 4. I've done this here. You can check your zones have come in properly by setting up a Material as below:

Continue on to the next page: Character Clothing – Part 3: UE4 Shaders
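When placing UV shells by hand (or scripting it), it helps to know the exact U range of each grid column. This is a minimal Python sketch of that arithmetic, assuming the 32-column layout described above; the helper names are my own and this is not Maya API code.

```python
ZONES = 32  # number of grid columns across the 0-1 UV space


def zone_u_range(zone):
    """Return the (min, max) U coordinates of a zone's column."""
    return zone / ZONES, (zone + 1) / ZONES


def zone_u_centre(zone):
    """Return the U coordinate at the middle of a zone's column,
    a safe target when scaling a UV shell down to fit."""
    return (zone + 0.5) / ZONES


def zone_of_u(u):
    """Return the zone index that a U coordinate falls into."""
    return int(u * ZONES)


if __name__ == "__main__":
    for zone in (0, 1, 23):
        print(zone, zone_u_range(zone), zone_u_centre(zone))
```

Zone 23 is the last one usable in UE4 given the 24-zone limit, so every shell should end up with U below 0.75 in that case.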

Character Clothing Tangent #1 – Bitmasks

A typical computer will store an unsigned integer as a 32-bit value. This means we use 32 'bits' to describe a value. A 'bit' is simply a value that can be "on" or "off", or as you may already be familiar: 0 or 1. Computers store numbers using these bits.

We can make any number from a list of 1s and 0s by using a simple technique:

1. We order our bits, and remember the index of each bit in our list:
2. For any index (i), we perform the operation 2^i, which we'll call our "binary" value (so index 0 gives 1 and index 1 gives 2):
3. Wherever we have a "1" in our "bit" value column, we add the "binary" value to a total: 001011 = 4+16+32 = 52

Bits are conventionally written right-to-left, with the lowest index on the right, so let's flip the bit order to be correct and add in some more examples:

If we extend this to 32 bits, we can make large numbers:

00000000000000000000000000000001 = 1
01101010010010001001001110101001 = 1,783,141,289

That's how we store values, but we can also look at these bits a different way…

Each bit can also be an entry in our list of "on" and "off" values. For simplicity, we'll go down to using 4 bits. Let's take 4 things in an imaginary 3D scene:

- Cube
- Cylinder
- Sphere
- Torus

Let us now represent each entry in that list as either a 0 or 1, depending on whether we want to hide them:

Hiding just the Sphere would give you a list of bits, in list order, of 0010 (written in the usual right-to-left binary order, that is 0100), where the third bit value, "1", tells us we want to hide the third item in the list: the Sphere.

We can then store this bitmask as an integer, a single number. In this case, hiding just the Sphere would be an integer value of "4". If we hid just the Cube, it would be "1". If we used an integer of "5", we would hide the Cube and the Sphere!

This is useful as we can pass such an integer value into the shader and work out exactly which vertices to hide.
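The four-object example can be written out in a few lines of Python. This is just a sketch to check the arithmetic above; `mask_for` and `hidden_items` are illustrative names.

```python
ITEMS = ["Cube", "Cylinder", "Sphere", "Torus"]  # index 0 is the lowest bit


def mask_for(hidden):
    """Build a bitmask with one bit set per hidden item."""
    mask = 0
    for name in hidden:
        mask |= 1 << ITEMS.index(name)  # 2^index is that item's "binary" value
    return mask


def hidden_items(mask):
    """List the items whose bit is set in the mask."""
    return [name for i, name in enumerate(ITEMS) if mask & (1 << i)]


if __name__ == "__main__":
    print(mask_for(["Sphere"]))          # the Sphere is index 2, so 2^2
    print(format(mask_for(["Sphere"]), "04b"))
    print(hidden_items(5))
```

Python's `format(value, "04b")` prints the bits in the conventional order, lowest bit on the right, which is why the Sphere-only mask reads as 0100 there rather than 0010.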

Character Clothing Zone Culling

Introduction

When developing characters with multiple layers of clothing, it is very possible you'll end up with this sort of issue:

As you apply layers of clothing, you may find that the layers intersect and clip. What you'd really like is this:

A common approach to solve this is to develop all the mesh combinations you need as separate meshes, like so:

Each of these is a separate mesh

This can restrict clothing designs and shapes, and lead to colossal amounts of geometry files to cope with all the variations. But there is a better way!

One solution I've found particularly interesting is how Star Citizen handle it. They break their clothes down into sections that can each be toggled on/off as needed, based on the combination of clothing applied.

I will now show you how to implement such a system in Unreal Engine 4 (though the approach is completely doable in Unity, too). This system will enable you to layer up clothing and hide any sections of those layers so that they no longer clip. The system is flexible and supports, in theory, an infinite number of culling zones (although that might become unmanageable from an artist's workflow perspective).

Performance cost should also be improved. Although all your vertices will be processed, they won't need drawing. As a result you'll have less to draw, less to light and less to process overall.

Requirements

We'll need a few things to get started.

- Unreal Engine 4. Any recent version should do
- A modelling tool. We'll be using Maya here, but as long as you can have multiple UV sets/channels, you'll be good

Preparation

We'll introduce you to a few topics in this tutorial that will make this process possible.
First off, we need to figure out how to cull parts of the mesh dynamically in a nice, optimised and efficient way. Then we need to figure out how to define an area to cull, and finally implement this in a nice way in Unreal Engine 4 so that an artist can control, per piece of clothing, which sections to hide underneath.

In order to figure out our best approach we need to consider the way we want to author the clothing content. The desired workflow:

- We want an artist to make an item of clothing, such as a leather jacket, a t-shirt or a vest, as they normally would.
- Import those as individual items into the game project.
- Layer up the clothing.
- Then, be able to configure each layer item with a value that tells our shader what parts of each layer to mask (all demonstrated in the Star Citizen video)

Some Theory

As discussed, it isn't practical to pre-calculate variations of a mesh with every possible other combination of clothing layering. We need to be able to cull dynamically within a mesh's vertex shader.

For culling, there's a small feature within vertex shaders we can use to our advantage: if a vertex's position is either infinity or not a number (referred to as "NaN"), the renderer will just cull it. So, if we know which vertices to hide, we'll be able to do that within the shader. Dividing the vertex's position by 0 will do just fine:

    if(cullThisVertex)
    {
        // Dividing by 0 is a great way to break a value
        // This vertex will no longer be drawn
        vertex.position = vertex.position / 0.0;
    }

We know how to cull the vertices, but a larger problem is still looming: how do we know which areas to cull?

So, time to figure out how to define those areas to cull in the shader. We want a value we can provide to the vertex shader that will know how to cull each zone. This is where bitmasks come in.

A Short Introduction to Bitmasks:

This part is heavy.
Basically, bitmasks allow us to manipulate the way computers store numbers in such a way that we can create a list of things to show/hide.

Tangent #1 – Bitmasks

There are two things to keep in mind:

- Modern consumer-grade GPUs only support 32-bit unsigned integers. This means that for each material on our character/clothing, we can only have 32 zones for culling.
- If you're using Unreal Engine 4, you will be limited to 24 zones. This is because you can't pass integers, or unsigned integers, to a Material without modifying the Engine. You can only pass floats, which are stored differently in memory than integers: a 32-bit float only has 24 bits of precision for whole numbers, so a bitmask using more than 24 bits may not survive the trip intact.

You can get around these limitations by breaking your meshes up. For example, separate the head, upper and lower parts of your body. This means that for each of these, you get your full zoning allocation. For this to work, you can't have single clothing pieces across these separations, such as overalls or jumpsuits, without additional work outside the scope of this tutorial.

Continue on to the next page: Character Clothing – Part 2: Maya
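The 24-zone limit is easy to see by pushing a bitmask through a 32-bit float and back. This is a minimal Python sketch using the standard `struct` module to emulate the float conversion; it is an illustration of the number format, not of how Unreal passes parameters internally, and `through_float32` is a name I've made up.

```python
import struct


def through_float32(value):
    """Round-trip an integer through a 32-bit float, as happens when a
    bitmask has to be passed as a float parameter."""
    packed = struct.pack("<f", float(value))
    return int(struct.unpack("<f", packed)[0])


if __name__ == "__main__":
    all_24_zones = (1 << 24) - 1          # bits 0..23 all set
    zone_24_and_0 = (1 << 24) | 1         # needs 25 bits of precision
    print(through_float32(all_24_zones) == all_24_zones)
    print(through_float32(zone_24_and_0) == zone_24_and_0)
```

Any combination of the first 24 zones survives unchanged, but as soon as a mask needs a 25th bit of precision the lowest bit is rounded away, so zone 0 would silently stop culling.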

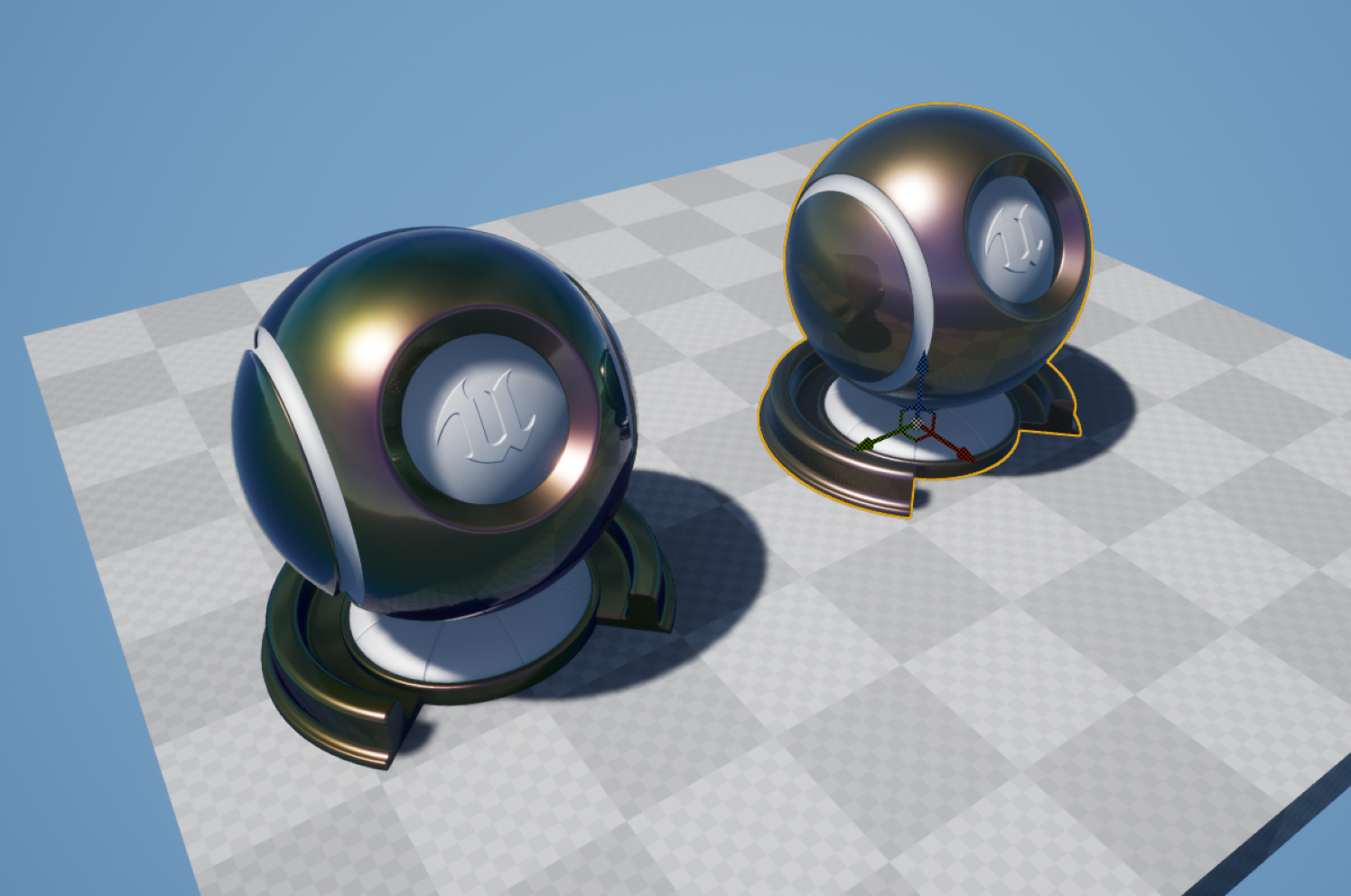

Approximating Thin Film Interference

Thin Film Interference is an optical effect caused by the constructive and destructive interference of light waves as they pass through a thin medium. This only occurs when the thickness of the film is in the range of visible light (so 380nm-780nm, approximately).

This video is marginally tangential, but explains the basics well:

The paper is fantastic, and the results have since been implemented in Unity and UE4. However, the shader is expensive. Whilst it is a practical and wonderfully accurate shader, it would not be something I would suggest for lower-end platforms.

Realtime thin film interference is heading towards bad.

Data Extraction

What I wanted to do was to find a reasonable way of approximating the colours and results from the shader, but at less expense.

The first step is to extract the effect to individual planar tiles, so that we can break down what the physical values in the shader produce in isolation.

The next step was to make the inputs for Dinc (thickness), n2, n3 and k3 based off UV coordinates and world space coordinates. That way, I can arrange planes in a grid to use as a look-up texture. For this example, I fixed the normal to be (0,0,1) and the light direction to be (0,0,1).

And this is the scene setup. Each one of these is a plane, set to 16.0 scale in the X-axis. They tile across the +X and -Y axes. I then took a High Resolution Screenshot of this to create a look-up texture, like so:

As you can see, the changes in brightness and saturation all vary based on the input properties. It appears subtle, but it adds up across the range of possible values.

There is, however, one more value we can't fit into a LUT: the viewing angle. This also has an impact on the final colors. I've exposed the light direction here to hopefully show the impact it has:

Changing the Light Direction

We can simulate this effect by offsetting our UVs later.
I imported the LUT with the 'No Mipmaps' and 'Nearest' filter settings, as we want no blurring between the grid cells.

Approximating

I then set up a new Material in such a way that the input values for Dinc, n2, n3 and k3 all correspond to UV coordinates in the LUT. I use a Fresnel function (dot(N,L)) to sample the LUT. Where you see the inputs in the Material Graph, the next few nodes for each are simply remapping the value ranges into UV space.

I sample the texture twice for k3 and blend between the two samples, as one sample didn't give me enough precision.

Next, I added in an option to use either a thickness mask or a thickness value.

Finally, to mask the effect, we need to multiply our underlying base color by the thin film color, as the light waves bouncing off the underlying surface will only reflect back with that surface color. We then blend this using a mask, so we can mask out areas where we don't wish to apply the film effect.

The full Material

Results

We can now achieve some mightily convincing results:

And if you want to really go to town, this sort of thing is completely possible:

And there is also a difference in instructions: from 440, down to 198, and this difference doesn't even account for the fact that the more expensive shader hasn't had support for a film thickness mask etc. added yet…

Shader complexity view:

Concluding Notes

My implementation isn't a full simulation; it's not designed for physically-accurate work. It is, however, close, and more importantly, cheap enough for mobile/low-end applications if you can handle the additional memory requirements for the LUT.

I've added all the files for the opaque shader here, for your use (including thickness map):
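The remapping and two-sample blend described above can be sketched numerically. This is a rough Python illustration under assumptions of my own: the value ranges and the number of LUT cells are hypothetical, and I'm assuming the second sample is the neighbouring cell, blended by the fractional position between the two. The actual Material Graph may be wired differently.

```python
import math


def remap(value, lo, hi):
    """Remap value from [lo, hi] into the 0-1 range, clamped."""
    t = (value - lo) / (hi - lo)
    return min(1.0, max(0.0, t))


def two_cell_blend(t, cells):
    """Pick the two neighbouring LUT cells around t (0-1) and the
    blend weight between them. With a 'Nearest' filtered texture,
    this manual blend recovers the in-between values."""
    x = t * (cells - 1)
    a = int(math.floor(x))
    b = min(a + 1, cells - 1)
    return a, b, x - a


def lerp(a, b, weight):
    """Linear blend between two sampled values."""
    return a + (b - a) * weight


if __name__ == "__main__":
    # Hypothetical: an input spanning 0..4 stored across 5 LUT cells
    t = remap(2.5, 0.0, 4.0)
    cell_a, cell_b, weight = two_cell_blend(t, cells=5)
    print(cell_a, cell_b, weight)
```

The trade-off is one extra texture sample in exchange for smooth variation along that axis, without having to make the LUT itself any larger.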

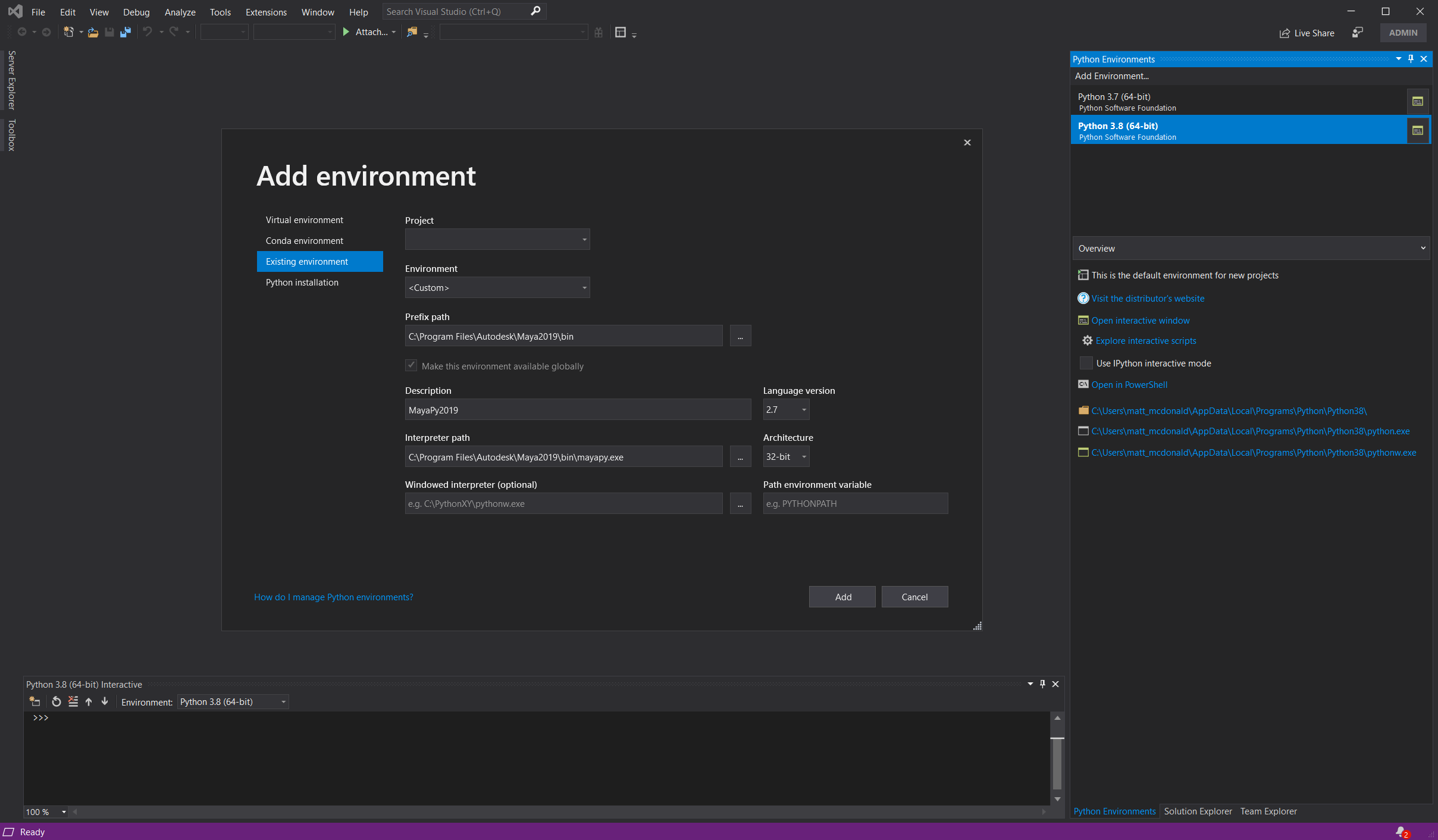

How I Work: Maya, Qt & PySide

[I'm improving this page, WIP]

There are plenty of ways to get up to speed with an IDE for developing Maya tools and plugins. Anecdotally, I've found not many studios enforce a particular IDE for Maya development, so this is how I've usually got mine configured.

Prerequisites

- An IDE. I use Visual Studio, but VS Code and Eclipse work fine.

Getting Started

Once you've installed those tools, locate where you installed Qt to, and find this application:

    ~\Qt\Qt5.6.1\5.6\mingw49_32\bin\designer.exe

Put a shortcut to this on your Desktop (or wherever). You can run this to build Maya-compatible .UI files.

Next, run Maya and run this command in Python:

    import maya.cmds as cmds

    cmds.commandPort(name=":7001", sourceType="mel")
    cmds.commandPort(name=":7002", sourceType="python")

You can, if you wish, add this to your userSetup.py/userSetup.mel file in your Maya scripts path.

I also head into VS and add in the Maya Python paths, so you get all the Maya-specific PyMEL commands. You can do this by adding Maya's Python interpreters to a new environment:

Open up Designer and make yourself a basic UI, like so:

Save this as "MyCustomUiFile.ui" in:

    C:\Users\<username>\Documents\Maya\<maya version, i.e. '2019'>\scripts\

Now, you can use the following template to open the UI and hook up events:

    import os
    import sys

    # Prefer PySide2 and fall back to PySide for older versions of Maya
    try:
        from PySide2.QtCore import *
        from PySide2.QtGui import *
        from PySide2.QtWidgets import *
        from PySide2.QtUiTools import *
        from PySide2 import __version__
        from shiboken2 import wrapInstance
    except ImportError:
        from PySide.QtCore import *
        from PySide.QtGui import *
        from PySide.QtUiTools import *
        from PySide import __version__
        from shiboken import wrapInstance

    from maya import OpenMayaUI as omui
    from pymel.all import *
    import maya
    import maya.cmds as cmds
    import pymel.core as pm
    import pymel.mayautils as mutil

    # int() works in both Python 2 and 3 (long() is Python 2 only)
    mayaMainWindowPtr = omui.MQtUtil.mainWindow()
    mayaMainWindow = wrapInstance(int(mayaMainWindowPtr), QWidget)

    windowId = "MyCustomUI"
    USERNAME = os.getenv('USERNAME')


    def loadUiWidget(uifilename, parent=None):
        """Properly Loads and returns UI files - by BarryPye on stackOverflow"""
        loader = QUiLoader()
        uifile = QFile(uifilename)
        uifile.open(QFile.ReadOnly)
        ui = loader.load(uifile, parent)
        uifile.close()
        return ui


    class createMyCustomUI(QMainWindow):
        # Change this to match wherever you saved your .ui file
        uiPath = "C:\\Users\\" + USERNAME + "\\Documents\\maya\\2019\\scripts\\MyCustomScript\\UI\\MyCustomUiFile.ui"

        def onExitCode(self):
            print("DEBUG: UI Closed!\n")

        def __init__(self):
            print("Opening UI at " + self.uiPath)
            mainUI = self.uiPath
            MayaMain = wrapInstance(int(omui.MQtUtil.mainWindow()), QWidget)
            super(createMyCustomUI, self).__init__(MayaMain)

            # main window load / settings
            self.MainWindowUI = loadUiWidget(mainUI, MayaMain)
            self.MainWindowUI.setAttribute(Qt.WA_DeleteOnClose, True)
            self.MainWindowUI.destroyed.connect(self.onExitCode)
            self.MainWindowUI.show()

        # You can use code like below to implement functions on the UI itself:
        # self.MainWindowUI.buttonBox.accepted.connect(self.doOk)
        # def doOk(self):
        #     print("Ok Button Pressed")


    if not (cmds.window(windowId, exists=True)):
        createMyCustomUI()
    else:
        sys.stdout.write("tool is already open!\n")

That should at least get you going with a basic interface to work from.

Quick Tip: Sort Translucency (UE4)

If you've ever made translucent objects in Unreal Engine, or Unity (or just about any modern 3D engine), you'll have possibly encountered this issue, where parts of your mesh render incorrectly in front of or behind itself.

Why does this happen?

The UE4 renderer will render all opaque objects to the various GBuffers first. When rendering opaque objects, UE4 writes to a Depth Buffer. The Depth Buffer stores depth information for each pixel (literally "depth from the camera": a Depth Buffer value of 100 is 100 units from the camera). When rendering a new opaque object we can test the pixel's depth against this buffer. If the pixel is closer, we draw that pixel and update the Depth Buffer. If it is further away (i.e. "behind an existing pixel"), we discard it.

Conveniently explained here by Udacity

After rendering opaque objects, it then moves on to Translucent objects. The renderer works in a similar way, taking each object and rendering it, checking against the depth values already rendered by the opaque pass. This works fine for simple things such as windows, where you won't get a mesh with faces in front of or behind one another.

However, it breaks down under these circumstances. This is because, when given a mesh, the renderer tackles the faces by their index. It renders the triangle that uses vertices {0,1,2} first, then continues up the chain. This means it may render faces in an order that does not match their order in front of the camera, so some faces are rendered in the wrong order. When rendering opaque objects, this isn't a problem, as every pixel is either fully occluded or not occluded. With Translucent objects, this isn't the case and we get pixels that stack.

This is the same mesh, but with different vertex orders. Note the different pattern of overlaid faces.

The CustomDepth buffer works identically to the Depth Buffer, aside from the fact that it only renders objects into the buffer that you define, including Translucent ones.
By using this buffer and doing a simple check in our material, we can make pixels that are located behind other pixels in our mesh fully transparent, making them disappear.

One caveat: you will not see any parts of the mesh behind itself. For example, if your mesh was made of two spheres, one inside the other, you would not see the inner sphere rendered at all. It’s a trade-off. There are other solutions to this problem, but this is the simplest and works in most cases.

On the left we have the default Translucency. On the right we have sorted Translucency. As you can see, we can no longer see back faces; this may not be what you want.

Rendering to the CustomDepth Buffer

Step 1 is to select your mesh, locate the “Render CustomDepth Pass” flag and enable it.

Expand Rendering and check this option

Secondly, find your Material and enable the “Allow CustomDepth Writes” flag.

NOTE: There is a bug here. This flag is ignored if your Material is Opaque, even if your Material Instances are Translucent. To get around this, set your Material to Translucent and make a Material Instance that is an Opaque version of it. Use that instance for opaque objects where you wish to share Material functionality.

I prefer to work in Material Functions using Material Attributes, but for ease of explanation we’ll just put the logic for the following into the Material directly. Here’s a simple material example.

Create a new node for SceneTexture and set the SceneTexture to be CustomDepth:

Now we need a few more nodes. PixelDepth doesn’t work here as a direct comparison for CustomDepth. We need to reconstruct the depth using this method:

I believe you could use PixelDepth here if you simply add a tolerance value to it. Untested, but it’d probably work.

We feed this into an If node with our opacity and a constant value of 0.

The Multiply by 0.999 is important here. This is the threshold: values of 1 or higher will make the mesh completely invisible. Values lower than 0.999 will create overlapping along edges.

Your mesh should now correctly sort!
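To make the logic of that If node easier to reason about outside the material editor, here is a minimal Python sketch of what happens at a single screen pixel. It is not UE4 code. The function names (`over`, `sorted_opacity`, `shade_pixel`) are made up for illustration, and two things are assumptions on my part: that the 0.999 multiplier is applied to the fragment's own depth, and that the comparison hides the fragment when the scaled depth is greater than or equal to the CustomDepth value. The real node graph may be wired differently.

```python
from itertools import permutations


def over(dst, src_colour, src_alpha):
    """Standard alpha 'over' blend of one fragment onto the current pixel colour."""
    return src_alpha * src_colour + (1.0 - src_alpha) * dst


def sorted_opacity(pixel_depth, custom_depth, opacity, threshold=0.999):
    """Stand-in for the If node (assumed wiring, see the text above).

    custom_depth is the nearest depth the mesh wrote into CustomDepth at this
    pixel. Fragments that are still behind it after scaling get opacity 0.
    """
    if pixel_depth * threshold >= custom_depth:
        return 0.0
    return opacity


def shade_pixel(fragments, background=0.0, use_custom_depth=False):
    """Blend (depth, colour, opacity) fragments in submission order, unsorted."""
    # CustomDepth behaves like a depth buffer: it keeps the nearest value.
    custom_depth = min(depth for depth, _, _ in fragments)
    colour = background
    for depth, frag_colour, opacity in fragments:
        if use_custom_depth:
            opacity = sorted_opacity(depth, custom_depth, opacity)
        colour = over(colour, frag_colour, opacity)
    return colour


if __name__ == "__main__":
    # Two faces of the same mesh covering one pixel: a near one and a far one.
    faces = [(100.0, 1.0, 0.5), (200.0, 0.2, 0.5)]
    for order in permutations(faces):
        print("default:", shade_pixel(list(order)),
              "sorted:", shade_pixel(list(order), use_custom_depth=True))
```

Without the check, the final colour changes depending on which face happens to be submitted first, which is the vertex-order artifact described earlier. With the check, the far face contributes nothing, so the result no longer depends on the order. Under the assumed comparison, a threshold of 1.0 also hides the nearest face itself, which lines up with the "1 or higher" warning above.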

Part 6: Concluding Notes

Calibrating Skin Textures

During the process of making this tutorial, it has become clear that if you plan on using this technique in full production, you may wish to calibrate your skin textures. Having looked at several sets of skin textures, it’s clear that black skin textures tend to have more noise and blemish detail than white skin. I suspect this is to add some visual detail to skin that is difficult to bring contrast to (as it’s darker, there are fewer available color and light values). Either way, converting black skin to be lighter results in noisier albedo textures than making lighter textures darker, which, conversely, seem to lack detail.

As such, it’s probably best to author skin at a relative mid-tone, so that when blemishes, freckles, moles, scars etc. are all added, the texture is calibrated well for both darker and lighter tones. You will also need to consider using a standardized base skin color on all your characters’ skin. If you use the same base chromophore value in all your albedo textures, then you can use the same hard-coded shader value for all characters.

In addition to all of this, I have found that, as with the noise, specular reflectance is also commonly an issue. Lighter skin, when darkened, is always significantly more ‘shiny’ than you would expect. Interestingly, dark skin made lighter does not suffer from this problem. Again, I suspect this is because white skin reflects more light generally across its surface, so issues with specular reflectance are not as noticeable. With black skin, bright highlights are instantly more noticeable. As a result, I would suggest always calibrating roughness and specular textures on darker skin and, at the very least, testing across a range of skin colors.

Going Further

Hopefully you’re now clearer on all the topics covered in this tutorial.
It’s worth trying some other LUTs if you feel like challenging yourself. One option is Chlorophyll A & B, as seen here:

You could also try our shader chromophore replacement technique on other things, such as environmental assets or clothing. It should allow you to re-color just about anything, not just skin.

References

I used many papers and websites over the course of this. These are the papers referenced and the ones I referred to the most:

Monte Carlo Modeling of Light Transport in Tissue (Steady State and Time of Flight), Steven L. Jacques
A Spectral BSSRDF for Shading Human Skin, Craig Donner and Henrik Wann Jensen
A Practical Appearance Model for Dynamic Facial Color, Jimenez et al.
A Layered, Heterogeneous Reflectance Model for Acquiring and Rendering Human Skin, Donner et al.

Complete Project Files

All the final project files are here: <todo: find the link… >